AgentEvolver: The Future of Autonomous, Self-Evolving AI Agents in 2025

The AI landscape in 2025 is rapidly shifting from static large language model (LLM) applications to intelligent, adaptive agents that learn and improve autonomously.

This evolution addresses critical challenges in reinforcement learning-based agent training: the high cost of manual data curation, inefficient exploration, and suboptimal sample utilization. AgentEvolver, an open-source framework released by Alibaba’s Tongyi Lab in late 2025, pushes the frontier by unifying three synergistic mechanisms (self-questioning, self-navigating, and self-attributing) for continuous, cost-effective autonomous agent improvement. This marks a significant step towards scalable, real-world deployable AI agents that grow smarter with less human intervention.

AgentEvolver Architecture Breakdown: Detailed Analysis

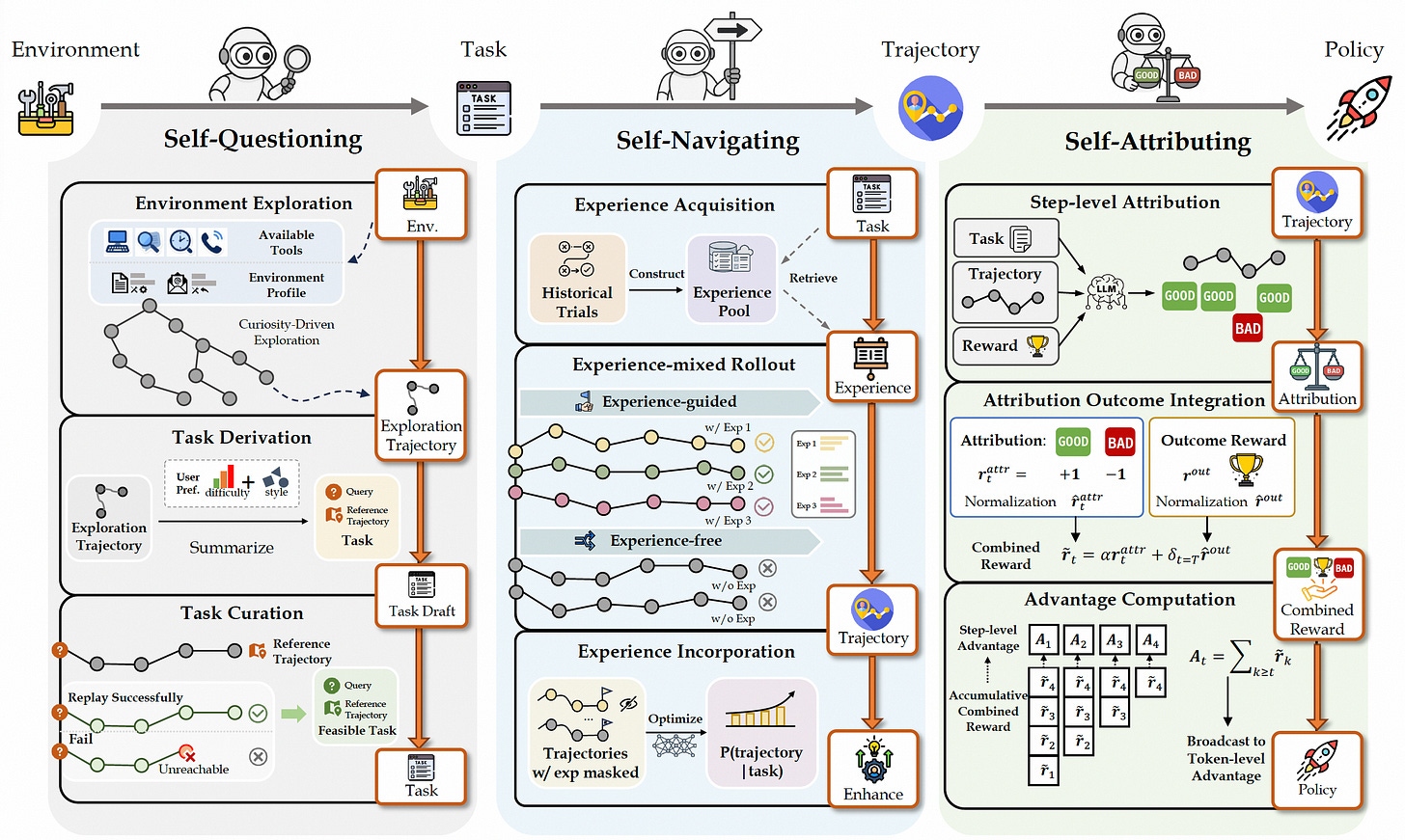

AgentEvolver’s architecture is organized into three synergistic modules (Self-Questioning, Self-Navigating, and Self-Attributing) that coordinate the autonomous evolution of AI agents and integrate key innovations that go beyond traditional RL approaches:

1. Self-Questioning Module

Purpose:

Autonomously explores novel environments and generates actionable tasks, eliminating the need for manual dataset construction.

Components & Flow:

Environment Exploration:

Utilizes available tools and constructs an environment profile. The agent explores its environment using curiosity-driven strategies, generating complex exploration trajectories reflecting its interactions.

Task Derivation:

Summarizes exploration data, referencing user preferences (difficulty, style), and derives potential tasks. It queries exploration trajectories to identify new, relevant objectives.

Task Curation:

Validates task feasibility by replaying trajectories. Distinguishes successful runs from unreachable states; only feasible, replayable tasks are curated for training.

Inputs/Outputs:

Inputs: Environment’s current state, agent’s toolset

Outputs: Drafted, validated tasks for downstream processing
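
To make the explore, derive, curate flow concrete, here is a minimal sketch on a toy environment. Everything in it is an assumption made for illustration: the counter environment, the `TOOLS` table, the `Task` class, and the function names are hypothetical and are not AgentEvolver's actual API. Random action selection stands in for the curiosity-driven strategy, and `max_len` stands in for a user preference such as difficulty.

```python
from dataclasses import dataclass
import random

# Hypothetical toy environment: an integer state changed by two tools.
# Nothing here is AgentEvolver's real API; it only illustrates the three stages.
TOOLS = {
    "increment": lambda state: state + 1,
    "double": lambda state: state * 2,
}


@dataclass(frozen=True)
class Task:
    description: str
    start_state: int
    goal_state: int
    reference_actions: tuple  # the actions that reached the goal during exploration


def explore(start_state, num_steps, rng):
    """Environment exploration: take random tool actions and log the trajectory."""
    state, trajectory = start_state, []
    for _ in range(num_steps):
        action = rng.choice(sorted(TOOLS))
        state = TOOLS[action](state)
        trajectory.append((action, state))
    return trajectory


def derive_tasks(start_state, trajectory, max_len):
    """Task derivation: turn trajectory prefixes (up to max_len steps) into goals."""
    tasks = []
    for end in range(1, min(max_len, len(trajectory)) + 1):
        actions = tuple(action for action, _ in trajectory[:end])
        goal = trajectory[end - 1][1]
        tasks.append(Task(f"Reach {goal} from {start_state}", start_state, goal, actions))
    return tasks


def curate(tasks):
    """Task curation: keep only tasks whose reference actions replay to the goal."""
    feasible = []
    for task in tasks:
        state = task.start_state
        for action in task.reference_actions:
            state = TOOLS[action](state)
        if state == task.goal_state:
            feasible.append(task)
    return feasible


rng = random.Random(0)
trajectory = explore(start_state=1, num_steps=5, rng=rng)
candidates = derive_tasks(1, trajectory, max_len=3)
# Add a deliberately unreachable task to show curation rejecting it.
candidates.append(Task("Reach -1 from 1", 1, -1, ("increment",)))
training_tasks = curate(candidates)
print(len(candidates), "candidates ->", len(training_tasks), "curated tasks")
# prints: 4 candidates -> 3 curated tasks
```

The replay check in `curate` is the important design point: a task only enters the training set if a known action sequence demonstrably reaches its goal.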

2. Self-Navigating Module

Purpose:

Enhances exploration efficiency and learning quality by leveraging historical experience and optimizing rollouts.

Components & Flow:

Experience Acquisition:

Aggregates historical trial data in an experience pool for reuse.

Experience-mixed Rollout:

Executes new tasks either guided by past experiences (exp-guided) or independently (exp-free). Each rollout corresponds to a unique trajectory, with or without reference to prior attempts.

Experience Incorporation:

Analyzes and optimizes collected trajectories, even masking certain experiences to prevent overfitting. Regularly updates the probability distribution (P) of achieving given tasks based on enhanced experience.

Inputs/Outputs:

Inputs: New tasks, stored experience pool

Outputs: Optimized trajectories, enhanced experience records
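
A rough sketch of experience-mixed rollouts follows. It rests on several assumptions: the experience pool is modeled as a plain dictionary of text hints, `guided_ratio` is a hypothetical knob for the share of exp-guided rollouts, and "masking" is interpreted as excluding the injected experience text from the training loss. The framework's real data structures and masking rules may differ.

```python
import random

# Hypothetical experience pool: task name -> short hint distilled from an
# earlier attempt. The structure is assumed for illustration only.
experience_pool = {
    "book_flight": "Call search_flights before create_booking.",
}


def build_rollout(task, guided, pool):
    """Assemble the prompt segments for one rollout.

    Each segment carries a `trainable` flag. Experience text is marked
    non-trainable so a later loss computation can mask it out.
    """
    segments = []
    if guided and task in pool:
        segments.append({"text": f"[experience] {pool[task]}", "trainable": False})
    segments.append({"text": f"[task] {task}", "trainable": True})
    return segments


def experience_mixed_rollouts(task, num_rollouts, guided_ratio, pool, rng):
    """Mix exp-guided and exp-free rollouts for the same task."""
    rollouts = []
    for _ in range(num_rollouts):
        guided = rng.random() < guided_ratio
        rollouts.append(build_rollout(task, guided, pool))
    return rollouts


def loss_mask(segments):
    """Return 1 for segments that contribute to the loss, 0 for masked ones."""
    return [1 if seg["trainable"] else 0 for seg in segments]


rng = random.Random(42)
rollouts = experience_mixed_rollouts(
    "book_flight", num_rollouts=8, guided_ratio=0.5, pool=experience_pool, rng=rng
)
for segments in rollouts:
    print(loss_mask(segments), [seg["text"] for seg in segments])
```

Exp-guided rollouts produce the mask `[0, 1]` (hint masked, task trainable) and exp-free rollouts produce `[1]`, so the policy can benefit from a hint during the rollout without being trained to reproduce the hint itself.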

3. Self-Attributing Module

Purpose:

Implements fine-grained credit assignment and reward integration, which enables efficient, detailed policy optimization.

Components & Flow:

Step-level Attribution:

Assesses each trajectory step using LLM-driven evaluations: good or bad states/actions are identified.

Attribution Outcome Integration:

Normalizes step-level outcomes for final reward calculation. Integrates both local (step) and global (trajectory) rewards to derive a combined signal.

Advantage Computation:

Calculates the advantage for each agent action using the combined reward over tokens or steps. This is then broadcast to the policy trainer for efficient updates.

Inputs/Outputs:

Inputs: Trajectories, associated tasks, reward functions

Outputs: Action-level advantage scores, updated policy weights
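
The three steps above can be sketched numerically. This is one plausible reading, not the framework's actual formula: good/bad labels are mapped to +1/-1, standardized within the trajectory, weighted by a hypothetical `alpha`, added to an outcome reward placed on the final step, and then summed from each step onward (undiscounted) to get a per-step advantage. The labels are hard-coded here in place of a real LLM judge.

```python
from statistics import mean, pstdev


def standardize(values):
    """Zero-mean, unit-variance scaling; returns zeros if all values are equal."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def step_advantages(step_labels, outcome_reward, alpha=0.5):
    """Combine step-level attribution with a trajectory-level outcome reward.

    step_labels: "good"/"bad" judgments, e.g. produced by an LLM judge.
    outcome_reward: scalar reward for the whole trajectory.
    alpha: assumed weight of the attribution signal (hypothetical hyperparameter).
    """
    attribution = standardize([1.0 if label == "good" else -1.0 for label in step_labels])
    rewards = [alpha * a for a in attribution]
    rewards[-1] += outcome_reward  # the global signal arrives at the final step
    # Advantage of a step = sum of combined rewards from that step onward.
    advantages, running = [], 0.0
    for reward in reversed(rewards):
        running += reward
        advantages.append(running)
    return advantages[::-1]


def broadcast_to_tokens(advantages, tokens_per_step):
    """Give every token in a step the advantage of that step."""
    return [adv for adv, n in zip(advantages, tokens_per_step) for _ in range(n)]


labels = ["good", "bad", "good", "good"]
adv = step_advantages(labels, outcome_reward=1.0)
token_adv = broadcast_to_tokens(adv, tokens_per_step=[3, 2, 4, 1])
print([round(a, 3) for a in adv])
print(len(token_adv), "token-level advantages")
```

In this example the step labeled "bad" ends up with the lowest advantage even though the trajectory as a whole succeeded, which is the point of step-level credit assignment: a sparse outcome reward alone would have treated all four steps identically.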

Dataflow Summary

Environment → Self-Questioning: Exploration generates candidate tasks.

Task → Self-Navigating: Tasks are executed with experience guidance, collecting rollouts.

Trajectory → Self-Attributing: Attribution and reward computation optimize policy learning.

Policy Outputs: Updated agent policies feed back into the next cycle of exploration and learning.
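
The cycle can be summarized as a short control loop. The function names and signatures below are hypothetical, and the three stages are replaced by toy stand-ins so the loop runs end to end; the sketch only shows how each stage's output feeds the next.

```python
def evolve(policy, propose_tasks, run_rollouts, update_policy, num_cycles):
    """Run the explore -> rollout -> attribute loop for a fixed number of cycles."""
    history = []
    for cycle in range(num_cycles):
        tasks = propose_tasks(policy)                 # Self-Questioning
        trajectories = run_rollouts(policy, tasks)    # Self-Navigating
        policy = update_policy(policy, trajectories)  # Self-Attributing + trainer
        history.append((cycle, len(tasks), len(trajectories)))
    return policy, history


# Toy stand-ins (assumptions) so the loop can execute.
def propose_tasks(policy):
    return [f"task-{policy['version']}-{i}" for i in range(2)]


def run_rollouts(policy, tasks):
    return [[f"{task}:step-{s}" for s in range(3)] for task in tasks]


def update_policy(policy, trajectories):
    return {"version": policy["version"] + 1}


final_policy, history = evolve(
    {"version": 0}, propose_tasks, run_rollouts, update_policy, num_cycles=3
)
print(final_policy)  # {'version': 3}
print(history)       # [(0, 2, 2), (1, 2, 2), (2, 2, 2)]
```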

Benchmarking Results: AgentEvolver vs. Baseline Models

AgentEvolver demonstrates significant performance gains over traditional agent frameworks and large-scale models while maintaining efficiency and scalability.

Performance Benchmarks:

Evaluated on AppWorld and BFCL-v3, AgentEvolver reaches best@8 scores of 69.4% to 76.7% with 14B parameters, outperforming baseline models that require far more resources.

Key Results:

On AppWorld, AgentEvolver (14B) reaches 48.7% avg@8 (vs. 18% for Qwen2.5-14B baseline).

On BFCL-v3, AgentEvolver (14B) achieves 66.5% avg@8 and 76.7% best@8, well above vanilla RL baselines.

Even the smaller AgentEvolver (7B) matches or surpasses larger, less specialized models, suggesting that architectural innovation can outweigh brute scale.
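
For readers unfamiliar with the metrics, the snippet below shows one common way to compute avg@k and best@k. It assumes each task is attempted k times with a binary success flag, that avg@k is the mean success rate across attempts, and that best@k counts a task as solved if any attempt succeeds. The benchmarks' exact scoring may differ, and the results table is made-up illustration data, not AgentEvolver output.

```python
def avg_at_k(results):
    """Mean per-task success rate across the k rollouts of each task."""
    per_task = [sum(flags) / len(flags) for flags in results.values()]
    return sum(per_task) / len(per_task)


def best_at_k(results):
    """Fraction of tasks solved by at least one of the k rollouts."""
    solved = sum(1 for flags in results.values() if any(flags))
    return solved / len(results)


# Illustrative data only: 4 tasks, 8 rollouts each, 1 = success, 0 = failure.
results = {
    "task_a": [1, 0, 0, 1, 0, 0, 0, 1],
    "task_b": [0, 0, 0, 0, 0, 0, 0, 0],
    "task_c": [1, 1, 1, 1, 1, 1, 1, 1],
    "task_d": [0, 0, 0, 0, 0, 0, 1, 0],
}

print(f"avg@8 = {avg_at_k(results):.1%}, best@8 = {best_at_k(results):.1%}")
# prints: avg@8 = 37.5%, best@8 = 75.0%
```

Under these definitions best@8 is always at least avg@8, which matches the pattern in the reported numbers: avg@8 reflects reliability across attempts, while best@8 reflects what the agent can do at least once.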

Mechanism Impact:

Adding Self-Questioning increases avg@8 and best@8 scores substantially.

Subsequent addition of Self-Navigating and Self-Attributing yields further improvements in both benchmarks.

Key Architectural Strengths:

Modular and service-oriented, enabling seamless upgrades and integration.

Explicit separation of exploration, experience management, and credit assignment for transparency and extensibility.

Fine-grained causal attribution for long-horizon tasks, improving both sample efficiency and safety in real-world deployments.

These mechanisms form a tightly coupled, unified pipeline that leverages the semantic capabilities of LLMs for environment interaction and policy evolution. Preliminary benchmark results on challenging tasks (AppWorld, BFCL-v3) indicate that AgentEvolver surpasses prior baselines using fewer parameters and adapts faster without expensive manual tuning.

Unlike older agent frameworks or isolated experiments, AgentEvolver also features:

A modular and extensible service-oriented architecture facilitating easy integration with diverse environments and customizable deployments.

A robust context manager supporting multi-turn interactions, which is essential for complex, stateful AI workflows.

Compatibility with reinforcement learning infrastructures like veRL for smooth policy updates.

Real-World Relevance for AI Systems

Enterprises and AI startups can adopt AgentEvolver today to accelerate agentic AI development, especially for applications demanding continual, autonomous skill acquisition:

Automation of complex workflows: In AI operations (AIOps), customer service, knowledge management, and supply chain, agents can self-generate tasks to self-improve their support functions dynamically.

Agent orchestration in multi-agent systems: Self-navigating mechanisms enable agents to share and reuse knowledge gained, optimizing resource use and avoiding redundant exploration.

Adaptive decision-making in changing environments: Self-attributing allows agents to understand the impact of intermediate decisions, improving safety and robustness in real-time scenarios.

Reduced reliance on manual dataset creation or human trainers: Cut costs and expedite development cycles by automating data collection via self-questioning.

Efficient policy refinement with smaller models: Useful for deployment on edge devices or resource-constrained infrastructure.

Actionable Steps & Best Practices

Do now:

Experiment with AgentEvolver’s open-source framework to develop your own self-evolving agents tailored to your domain and environment.

Leverage the modular components: environment service for plugging in your environment, task manager for task generation, and experience manager for reuse of learned knowledge.

Use the provided training pipelines as baselines and customize credit assignment strategies for your use cases.

Avoid:

Dependence on handcrafted datasets and static RL pipelines, as they add cost and reduce adaptability.

Overly complex architectures without modularity. AgentEvolver’s design counters that by encouraging extensibility.

Watch out for:

Rapid evolution in training algorithms that improve sample efficiency and exploration strategies, for example upcoming LLM-level self-evolving approaches that integrate all three mechanisms more deeply.

The growing importance of interpretability and safety in credit assignment to prevent unintended behavior in long-horizon tasks.

Closing Takeaway

In 2025 and beyond, truly autonomous AI agents are no longer theoretical. Frameworks like AgentEvolver represent the state-of-the-art in creating cost-effective, scalable, and continually improving intelligent systems. By unifying automatic task generation, experience-guided exploration, and fine-grained credit assignment, this paradigm shifts AI development towards less manual labor and faster deployment cycles. AI founders and developers are encouraged to build on this foundation to power the next wave of real-world adaptive, self-evolving AI solutions.

For engineers and AI builders seeking more technical breakdowns and up-to-date insights into frontier AI systems, stay tuned and consider running your own AgentEvolver experiments now.