Building Scalable and Resilient Infrastructure for AI and ML Pipelines in 2026

If you’re starting out with an AI or ML product idea, or scaling your organization’s existing capabilities, you need infrastructure that grows with demand, stays resilient to failures, and supports rapid iteration. Whether you’re an early-stage startup turning a concept into a working product or an established enterprise deploying complex ML workflows, the infrastructure choices you make now will determine how reliably and efficiently your pipelines perform under real-world load.

This post looks at how modern infrastructure, especially Kubernetes and cloud-native tooling, helps teams build fault-tolerant, scalable, production-ready AI pipelines.

The Imperative of Scalability and Resilience

Scalability means your system can handle growing workloads without degrading performance, while resilience is about ensuring continuous operation despite failures like hardware issues, network outages, or software bugs.

These qualities are critical in AI/ML because:

Data volumes and model complexity keep growing, requiring elastic compute and storage.

Pipelines run 24/7 and must tolerate spikes, failures, and deployment errors gracefully.

Downtime results in lost revenue, poor user experience, and eroded trust.

Legacy infrastructure often falls short by relying on brittle monolithic setups or manual failover mechanisms that don’t scale well or recover quickly.

Why Kubernetes and Cloud-Native Are Game Changers

Kubernetes has emerged as the foundation for scalable and resilient AI/ML pipeline infrastructure. It provides:

Container orchestration: Automates deployment, scaling, and management of containerized workloads.

Self-healing: Automatically restarts failed containers and redistributes workloads.

Rolling updates: Replaces pods incrementally, enabling deployments with little or no downtime and fast rollbacks.

Multi-zone support: A single cluster can spread nodes across availability zones within a region; multi-region disaster recovery typically relies on running several clusters.

Paired with cloud platforms like AWS, GCP, or Azure, Kubernetes enables global, fault-tolerant infrastructure with rich ecosystem integrations from compute to monitoring.
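The self-healing behavior listed above comes from a control loop that keeps comparing the desired state with what is actually running. The sketch below is a toy model of that idea in plain Python, not Kubernetes code: the `Pod` and `ReplicaSet` classes and the `worker-N` names are assumptions made for illustration, and the loop only handles replacing failed pods, not scaling down.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class Pod:
    name: str
    healthy: bool = True


@dataclass
class ReplicaSet:
    """Toy stand-in for a controller that keeps `desired` healthy pods running."""
    desired: int
    pods: list = field(default_factory=list)
    _ids: count = field(default_factory=count, repr=False)

    def reconcile(self) -> list:
        """Drop failed pods and start replacements; return the actions taken."""
        actions = []
        for pod in [p for p in self.pods if not p.healthy]:
            self.pods.remove(pod)
            actions.append(f"removed {pod.name}")
        while len(self.pods) < self.desired:
            pod = Pod(name=f"worker-{next(self._ids)}")
            self.pods.append(pod)
            actions.append(f"started {pod.name}")
        return actions


rs = ReplicaSet(desired=3)
rs.reconcile()              # initial scale-up to three pods
rs.pods[1].healthy = False  # simulate a crash
print(rs.reconcile())       # ['removed worker-1', 'started worker-3']
```

The point of the design is that nobody issues a "restart" command: the loop only knows the target count and converges toward it every time it runs.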

Essential Building Blocks for Modern AI/ML Infrastructure

High Availability Clusters

Deploy AI pipeline components across multiple nodes, zones, or clusters to avoid single points of failure. Use managed Kubernetes services such as AWS EKS, GCP GKE, or Azure AKS for production-grade control planes and autoscaling.

Infrastructure as Code (IaC) and GitOps

Define infrastructure declaratively with tools like Terraform, Pulumi, or Crossplane. Use GitOps workflows (ArgoCD or Flux) to enforce consistent environments, auditability, and rapid rollback capabilities.
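At the core of a GitOps workflow is a comparison between the state declared in Git and the state observed in the environment. The following is a minimal sketch of that comparison, assuming both states can be represented as dictionaries keyed by resource name. The `plan` function and the resource names and specs are hypothetical; tools like ArgoCD, Flux, and Terraform implement far richer versions of this step.

```python
def plan(desired: dict, live: dict) -> dict:
    """Compare declared state with live state and list what must change."""
    shared = desired.keys() & live.keys()
    return {
        "create": sorted(desired.keys() - live.keys()),
        "delete": sorted(live.keys() - desired.keys()),
        "update": sorted(k for k in shared if desired[k] != live[k]),
    }


# Hypothetical resource names and specs, for illustration only.
desired_state = {
    "trainer": {"replicas": 4, "image": "trainer:1.3"},
    "inference-api": {"replicas": 2, "image": "api:2.0"},
}
live_state = {
    "inference-api": {"replicas": 1, "image": "api:2.0"},  # scaled down by hand
    "debug-shell": {"replicas": 1, "image": "busybox"},    # created by hand
}

changes = plan(desired_state, live_state)
print(changes)
# {'create': ['trainer'], 'delete': ['debug-shell'], 'update': ['inference-api']}
```

Because the declared state is the only input, rolling back amounts to reverting a commit and letting the same comparison run again.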

Workflow Orchestration and Automation

Leverage frameworks like Kubeflow Pipelines, Apache Airflow, and Metaflow for end-to-end ML workflow orchestration with retries, parallel execution, and monitoring. These tools integrate tightly with Kubernetes and cloud resources to scale workloads on demand.
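To make "retries" and "parallel execution" concrete, here is a small standard-library sketch of what an orchestrator does for each step. It is not the API of Kubeflow Pipelines, Airflow, or Metaflow: `run_with_retries`, `make_flaky_step`, and the step names are assumptions for illustration, and the flaky steps simulate transient failures.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_with_retries(task, max_attempts=3, base_delay=0.01):
    """Call `task` until it succeeds, backing off exponentially between tries."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure
            time.sleep(base_delay * 2 ** (attempt - 1))


def make_flaky_step(name, failures_before_success):
    """Build a hypothetical pipeline step that fails a fixed number of times first."""
    state = {"calls": 0}

    def step():
        state["calls"] += 1
        if state["calls"] <= failures_before_success:
            raise RuntimeError(f"{name}: transient failure")
        return f"{name}: done after {state['calls']} attempt(s)"

    return step


steps = [
    make_flaky_step("extract-features", 0),
    make_flaky_step("validate-data", 2),
]

# Independent steps run in parallel; each one retries on its own schedule.
with ThreadPoolExecutor(max_workers=2) as pool:
    results = list(pool.map(run_with_retries, steps))

print(results)
```

In a real orchestrator the retry count and backoff are usually declared per task rather than hand-coded, but the behavior is the same: a transient failure in one step does not fail the whole run.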

Distributed Training

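Use libraries such as Horovod, PyTorch Distributed Data Parallel (DDP), or TensorFlow’s MultiWorkerMirroredStrategy to accelerate training across GPUs and nodes. This reduces time-to-model, usually without sacrificing accuracy, provided hyperparameters such as the learning rate are tuned for the larger effective batch size.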
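The idea shared by these data-parallel libraries is that each worker computes gradients on its own shard of the batch, the gradients are averaged across workers, and every worker applies the same update. The sketch below simulates that in plain Python on a one-parameter linear model. It does not use Horovod, PyTorch, or TensorFlow; `all_reduce_mean` is a stand-in for the real collective operation, and the data and learning rate are made up for illustration.

```python
def gradient(w, batch):
    """Mean gradient of the squared error (w*x - y)**2 with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def all_reduce_mean(values):
    """Stand-in for the collective that averages gradients across workers."""
    return sum(values) / len(values)


data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]  # samples of y = 2x
num_workers = 2
shards = [data[i::num_workers] for i in range(num_workers)]  # equal-sized shards

w, lr = 0.0, 0.05
for _ in range(50):
    local_grads = [gradient(w, shard) for shard in shards]  # one per worker
    w -= lr * all_reduce_mean(local_grads)                  # same update everywhere

print(round(w, 3))  # converges toward the true slope of 2
```

With equal-sized shards, the averaged gradient matches the gradient of the full batch, which is why the result is the same as single-worker training on the combined data.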

Model Serving and Monitoring

Serving tools like BentoML and MLflow enable cloud-native, scalable model deployment; BentoML, for example, supports adaptive batching of inference requests. Coupled with Prometheus for metrics collection and Grafana for dashboards, they provide the infrastructure and inference monitoring needed to catch failures early.
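One simple way to think about adaptive batch sizing is as a feedback controller: grow the batch while latency stays within a target, and shrink it when the target is exceeded. The sketch below uses an additive-increase, multiplicative-decrease rule. This is an illustrative assumption, not the algorithm any particular serving platform uses, and `AdaptiveBatchSizer` and the latency cost model are hypothetical.

```python
class AdaptiveBatchSizer:
    """Additive-increase, multiplicative-decrease controller for batch size."""

    def __init__(self, target_latency_ms, min_size=1, max_size=64):
        self.target_latency_ms = target_latency_ms
        self.min_size = min_size
        self.max_size = max_size
        self.size = min_size

    def observe(self, latency_ms):
        """Update the batch size from the last batch's latency and return it."""
        if latency_ms > self.target_latency_ms:
            self.size = max(self.min_size, self.size // 2)  # back off quickly
        else:
            self.size = min(self.max_size, self.size + 1)   # probe upward slowly
        return self.size


def simulated_latency_ms(batch_size):
    """Hypothetical cost model: fixed overhead plus a per-item cost."""
    return 5.0 + 2.0 * batch_size


sizer = AdaptiveBatchSizer(target_latency_ms=25.0)
history = []
for _ in range(20):
    history.append(sizer.size)
    sizer.observe(simulated_latency_ms(sizer.size))

print(history)
```

Under this cost model the batch size climbs until a batch overshoots the latency target, halves, and climbs again. Emitting the chosen size and observed latency as metrics is what lets a Prometheus and Grafana setup show whether the controller is behaving.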

Disaster Recovery and Backup

Incorporate multi-region backups, automated failover mechanisms, and snapshotting to ensure business continuity during large-scale outages. Kubernetes operators and cloud provider services enable automated recovery with minimal downtime.

Pitfalls of Older Tools and Approaches

Traditional VM-based deployments lack agility and automatic scaling, increasing operational overhead during traffic spikes.

Manual pipeline management or script-heavy orchestration without retries and monitoring leads to fragile workflows.

Lack of versioning or environment reproducibility causes drift and unreliability in production.

Absence of integrated security and compliance in early infrastructure often results in later-stage vulnerabilities.

These shortcomings make legacy setups unsuitable for modern AI demands where scale and fault tolerance are non-negotiable.

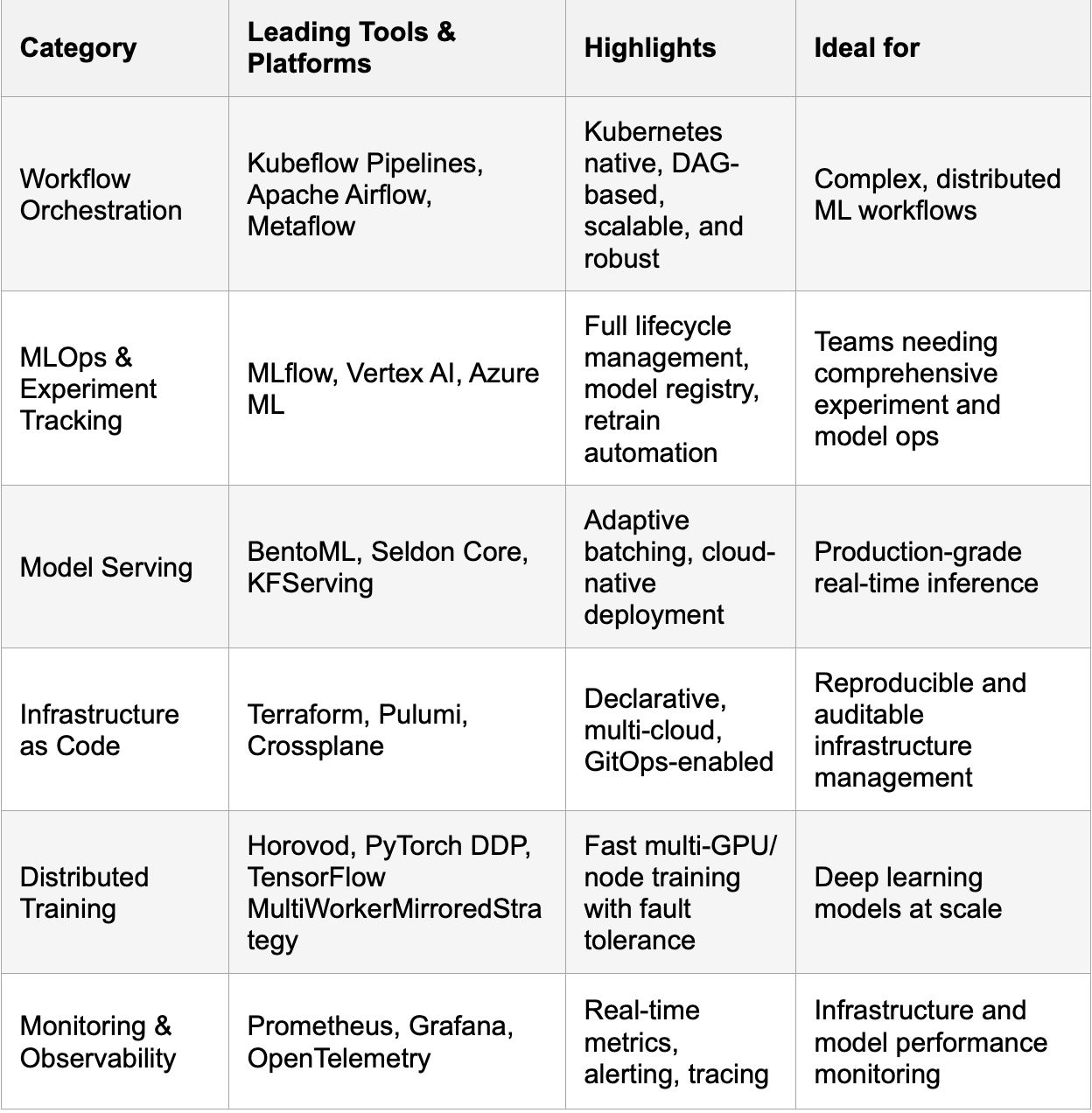

Latest Open-Source and Industry-Leading Tools (2025-26)

Integrating these tools into a unified pipeline supported by cloud-native infrastructure simplifies scaling and resilience for businesses of every size.

Practical Tips for Building Your AI/ML Infrastructure

Start with containerization: Shift workloads to Docker containers early to unlock Kubernetes benefits.

Automate everything: Build CI/CD pipelines for ML code, data, model versions, and infrastructure.

Design for failure: Assume failures will happen; design your system to recover without human intervention.

Use managed services wisely: Leverage cloud provider managed Kubernetes, storage, and monitoring to reduce ops burden.

Invest in observability: Implement telemetry from day one to detect issues before they affect users.

Standardize on GitOps: Use Infrastructure as Code and GitOps for reproducibility, governance, and easy rollback.

Experiment with serverless for lightweight tasks: Services such as Google Cloud Functions or AWS Lambda can cost-effectively handle event-driven or short-lived ML steps.

Conclusion

Building scalable and resilient infrastructure is foundational for successful AI and ML deployments. Combining Kubernetes, cloud-native principles, automation, and modern open-source tools helps developers and companies handle peak loads, recover from failures, and innovate rapidly. By investing in these practices today, you give your AI pipelines a foundation that can grow from concept to production.