DeepSeek V3.2: The Open-Source AI Model Beating GPT-5.1 and Claude Opus 4.5

When DeepSeek quietly released V3.2 on December 1, 2025, it didn’t just announce a new language model. It fundamentally challenged the assumption that frontier AI requires billion-dollar budgets and closed-source secrecy.

For the first time, an open-source model has achieved gold-medal performance across elite international competitions, launched at prices up to 68 times lower than Claude Opus 4.1, and delivered capabilities that match or exceed OpenAI’s GPT-5.1 on hard reasoning tasks.

This isn’t hype. This is a watershed moment in AI development that deserves your attention.

What Exactly is DeepSeek V3.2?

DeepSeek V3.2 is a 671-billion parameter language model trained by DeepSeek, a Chinese AI research organization founded in 2023 by Liang Wenfeng (CEO of High-Flyer, a quantitative hedge fund managing $8 billion in assets).

The key word here is open-source. Unlike GPT-5.1 or Gemini 3.0 Pro, DeepSeek V3.2 is available under the MIT License. You can download the model weights, run it locally, fine-tune it for your specific needs, and deploy it to production without licensing fees or API rate limits.

Two variants address different use cases:

DeepSeek-V3.2: The general-purpose version balancing reasoning capability with computational efficiency

DeepSeek-V3.2-Speciale: The frontier reasoning variant optimized for maximum performance on hard problems

The Breakthrough Innovation: Sparse Attention

The technical innovation that makes V3.2 remarkable is called DeepSeek Sparse Attention (DSA).

Traditional transformer models use “dense attention,” where every token in the input attends to every other token. This creates a computational bottleneck. Processing a 128,000-token document isn’t just 128x harder than processing 1,000 tokens. Because attention cost grows quadratically, O(n²), it takes roughly 128² ≈ 16,000 times as much attention computation.

DeepSeek’s sparse attention works differently. Instead of having every token attend to everything, it intelligently routes attention to only the most relevant tokens. Here’s how:

A lightweight “lightning indexer” rapidly computes relevance scores using minimal computation and lower precision (FP8 instead of FP32)

Only the top-scoring tokens, a fixed budget of k per query, actually receive full attention from the model

This reduces the cost of the main attention computation from O(n²) to approximately O(k·n), where n is the sequence length and k is the number of selected tokens (k is much smaller than n)
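The selection step above can be illustrated with a toy, single-query sketch in plain Python. It assumes the model keeps a fixed budget of k tokens per query, and it uses a truncated dot product as a stand-in for the lightweight indexer. The function names, the `index_dim` parameter, and the toy data are hypothetical and are not taken from DeepSeek’s implementation.

```python
import math

def indexer_scores(query, keys, index_dim):
    """Cheap relevance scores: dot product over the first index_dim dimensions only.
    This truncation is an assumed stand-in for a small, low-precision indexer."""
    return [sum(q * x for q, x in zip(query[:index_dim], key[:index_dim])) for key in keys]

def sparse_attention(query, keys, values, k, index_dim=1):
    """Softmax attention for one query, restricted to the k keys the indexer ranks highest."""
    scores = indexer_scores(query, keys, index_dim)
    ranked = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)
    selected = sorted(ranked[:k])
    # Full-precision attention, computed only over the selected positions.
    scale = math.sqrt(len(query))
    logits = [sum(q * x for q, x in zip(query, keys[i])) / scale for i in selected]
    peak = max(logits)  # subtract the max for numerical stability
    weights = [math.exp(v - peak) for v in logits]
    total = sum(weights)
    dim = len(values[0])
    output = [sum(w * values[i][d] for w, i in zip(weights, selected)) / total
              for d in range(dim)]
    return output, selected

def attention_pairs(n, k=None):
    """Query-key pairs scored by full attention: n*n when dense, n*min(k, n) when sparse."""
    return n * n if k is None else n * min(k, n)

# Toy example: four tokens, keep the two most relevant for this query.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
values = [[1.0], [2.0], [3.0], [4.0]]
output, selected = sparse_attention(query, keys, values, k=2)
print(selected, output)
print(attention_pairs(128_000), attention_pairs(128_000, k=2_048))
```

With an illustrative budget of k = 2,048 (an assumption for this example), a 128,000-token input needs about 62 times fewer full attention scores than the dense case. The indexer still looks at every token, so the saving comes from keeping that pass cheap and reserving the expensive computation for the selected tokens.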

The result? 2-3x faster inference, 30-40% less memory consumption, and 50% lower API costs compared to the previous V3.1 model with virtually identical output quality.

This is what real optimization looks like. Not bigger. Smarter.

Performance: Where V3.2 Actually Stands

Let’s talk numbers, because that’s where the story becomes compelling.

Head-to-Head Reasoning Benchmarks

Elite Competition Results

This is where things get interesting. DeepSeek V3.2-Speciale achieved gold-medal performance across four major international competitions:

No open-source model had achieved this before. DeepSeek didn’t just match proprietary models on benchmarks. It reached gold-medal level on some of the most demanding competition problems in the world.

Coding and Software Development

On SWE-Bench Verified (which tests if models can generate bug-fixing code that actually passes unit tests):

DeepSeek V3.2: 73.1%

Claude Opus 4.5: 80.9%

GPT-5.1-Codex-Max: 77.9%

Claude Sonnet 4.5: 77.2%

DeepSeek V3.1: 66%

On Aider-Polyglot (multi-file code generation):

DeepSeek V3.2: 74.5%

Long-Context Capability

DeepSeek V3.2 supports a 128,000 token context window. That’s approximately 300-400 pages of text in a single request. You can:

Analyze entire research papers or books without chunking

Process full codebases for analysis and refactoring

Maintain conversations of 100+ turns in one session

Generate reports from dozens of source documents simultaneously

The Cost Advantage Is Staggering

Here’s where DeepSeek becomes genuinely disruptive.

With prompt caching:

DeepSeek V3.2: $0.028/M (cached input) + $0.42/M output

GPT-5.1: Cache reads at 90% discount

Claude Opus 4.5: Cache reads at 90% discount

Real-World Monthly Costs

Let’s translate this to real money. If you’re processing 1 billion tokens monthly (500M input, 500M output):

An enterprise paying $45,000/month to Claude Opus 4.1 could run the equivalent workload on DeepSeek for under $400. That’s a 99% cost reduction while getting comparable performance on reasoning tasks.
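The arithmetic behind that comparison is easy to check. The sketch below uses only figures quoted in this article: the DeepSeek list prices ($0.28/M input, $0.028/M cached input, $0.42/M output), the 500M/500M token split, and the $45,000 Claude Opus 4.1 bill. The helper name `monthly_cost` is made up for illustration.

```python
def monthly_cost(input_tokens, output_tokens, input_price_per_m, output_price_per_m):
    """Dollar cost for a month of usage, with prices quoted per million tokens."""
    return ((input_tokens / 1_000_000) * input_price_per_m
            + (output_tokens / 1_000_000) * output_price_per_m)

INPUT_TOKENS = 500_000_000
OUTPUT_TOKENS = 500_000_000
QUOTED_OPUS_BILL = 45_000  # monthly figure quoted in the article, not recomputed here

deepseek = monthly_cost(INPUT_TOKENS, OUTPUT_TOKENS, 0.28, 0.42)
# Best case: every input token is served from the prompt cache.
deepseek_cached = monthly_cost(INPUT_TOKENS, OUTPUT_TOKENS, 0.028, 0.42)
reduction = 1 - deepseek / QUOTED_OPUS_BILL

print(f"DeepSeek V3.2 (no cache):   ${deepseek:,.2f}/month")
print(f"DeepSeek V3.2 (all cached): ${deepseek_cached:,.2f}/month")
print(f"Reduction vs. quoted bill:  {reduction:.1%}")
```

At list prices the workload comes to about $350 a month, or about $224 if every input token hits the cache, which is consistent with the “under $400” and “99%” figures above.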

Even more compelling: you can run DeepSeek V3.2 locally on your own hardware. No API calls. No usage tracking. No pricing surprises. Just download the model weights (under MIT license) and deploy.

Model Capabilities Comparison

Strengths and Weaknesses

Open-Source: Why This Matters

DeepSeek released the full model weights on Hugging Face and GitHub. Not a restricted API. Not a chat interface. The actual model.

This means you can:

Fine-tune the model on your proprietary data

Distill V3.2 into a smaller model optimized for your specific use case

Deploy privately in your own infrastructure for data-sensitive applications

Modify the model architecture for specialized tasks

Study the training approach and sparse attention implementation

For healthcare companies handling patient data, financial institutions managing sensitive trading strategies, or governments managing classified information, local deployment isn’t a luxury. It’s a requirement.

DeepSeek made the strategic decision that open science advances AI faster than corporate secrecy. The evidence suggests they’re right.

The Agentic Capabilities You Should Know About

V3.2 is the first DeepSeek model where “thinking” integrates directly into tool use. The model can:

Execute code and observe results in real-time

Search the web and process results

Call calculators and interpret output

Chain multiple tools together while maintaining coherent reasoning

The model was trained on 1,800+ synthesized agentic environments and 85,000+ complex agent instructions, including:

24,667 code agent tasks

50,275 search agent tasks

5,908 code interpreter tasks in real Jupyter environments

4,417 general agent tasks

The implication: DeepSeek V3.2 isn’t just a better chatbot. It’s a tool-using agent that can autonomously solve complex multi-step problems.

Who is DeepSeek? Why Should You Trust Them?

DeepSeek was founded in July 2023 by Liang Wenfeng, CEO of High-Flyer (a quantitative hedge fund managing $8 billion). The company operates as a research-first organization, not a commercial venture seeking quick returns.

Liang explained the philosophy bluntly: “I wouldn’t be able to find a commercial reason [for founding DeepSeek] even if you ask me to. Because it’s not worth it commercially. Basic science research has a very low return-on-investment ratio.”

The team assembled PhD graduates from elite Chinese universities (Peking, Tsinghua), motivated by scientific curiosity and by the challenge of working around U.S. export restrictions on advanced AI chips. High-Flyer had been accumulating GPUs since 2019, eventually acquiring approximately 50,000 GPUs (primarily H800s, Nvidia’s export-compliant variant of the H100 for the Chinese market).

This funding model enabled something unusual: computational abundance without commercial pressure. They could experiment freely, pursue unconventional ideas, and share results openly because profit margins weren’t the constraint.

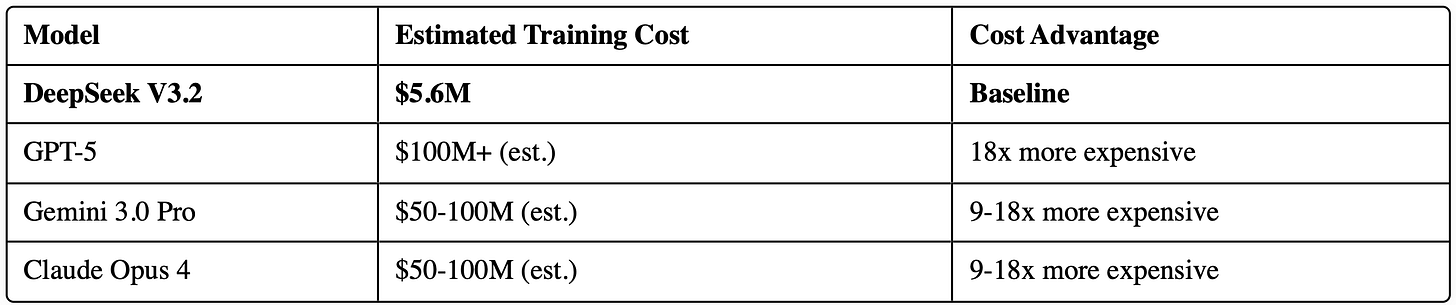

The Efficiency Story: 671B Parameters, $5.6M Training Cost

Here’s the part that should concern Silicon Valley: the DeepSeek-V3 base model that V3.2 builds on was reportedly trained for approximately $5.576 million using 2.788 million GPU hours, which works out to about $2 per GPU hour.

Training Cost Comparison

How is this possible? Through architectural innovation and efficient training:

Mixture-of-Experts (MoE): Only 37 billion parameters activate per token, not all 671B

Sparse Attention (DSA): Dramatically reduced training compute requirements for long sequences

Group Relative Policy Optimization (GRPO): More efficient reinforcement learning without separate critic models

Expert Choice Routing: Optimal load balancing preventing redundancy
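To make the “no separate critic” point concrete, here is a minimal sketch of the group-relative advantage at the heart of GRPO. Several answers are sampled for the same prompt, and each one is scored against the group’s own mean and spread instead of against a learned value model. The function name, the `eps` term, and the use of the population standard deviation are assumptions made for this illustration, not details confirmed by DeepSeek’s training code.

```python
from statistics import mean, pstdev

def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each sampled answer's reward against its own group.

    rewards: one scalar reward per answer sampled for the same prompt.
    eps: small constant (assumed) that avoids division by zero when all rewards match.
    """
    group_mean = mean(rewards)
    group_std = pstdev(rewards)
    return [(r - group_mean) / (group_std + eps) for r in rewards]

# Four sampled answers to one prompt: two judged correct (1.0), two incorrect (0.0).
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
print(advantages)
```

Answers that beat their group’s average get a positive advantage and are reinforced, while the rest are pushed down. If every answer in a group earns the same reward, all advantages are zero and that prompt contributes no learning signal.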

The message is clear: throwing more money and more GPUs at the problem isn’t the only path to frontier AI. Architecture and algorithmic innovation matter. A lot.

The Limitations (Be Honest About These)

V3.2 isn’t perfect. Here’s what it can’t do:

No Native Vision/Multimodality: V3.2 is text-only. It can’t natively process images, videos, or audio. While document inlining workarounds exist, this is a significant gap versus GPT-5.1, Claude Opus 4.5, and Gemini 3.0 Pro.

Speciale Doesn’t Support Tools: The high-reasoning Speciale variant specifically lacks tool-calling capabilities. It’s optimized for pure reasoning only.

Context Window Degradation: While technically supporting 128K tokens, attention quality degrades after 20-25K tokens. Reliable performance is limited to approximately 4K tokens at the start and end of sequences.

Hardware Requirements: Optimal deployment requires enterprise-grade GPUs (H100, H200). Running V3.2 locally requires significant infrastructure investment.

Experimental Status: V3.2-Exp was explicitly positioned as an intermediate step. Expect further iteration and refinement.

Real-World Applications

For Software Development Teams

Use V3.2 for code review, refactoring, and bug fixing (73.1% on SWE-Bench)

Generate boilerplate code and architecture recommendations

Cost: $1-5 per pull request (vs. $10-50 with Claude Opus 4.5)

For Healthcare Organizations

Analyze medical research papers and clinical studies

Generate treatment summaries from patient records

Process long medical documentation privately (local deployment)

For Financial Services

Analyze earnings calls and market reports

Detect anomalies in trading patterns

Process regulatory documents and compliance checks

High-Flyer’s quantitative-finance heritage makes finance a natural fit

For Education

Generate adaptive assessment problems

Create personalized study materials

Explain complex concepts at multiple difficulty levels

For Enterprise Automation

Multi-step workflow orchestration

Complex data extraction and transformation

Code generation and system administration

The Bigger Picture: What This Means for AI

DeepSeek V3.2 signals three major shifts:

1. Open-Source Can Match Closed-Source

Frontier performance is no longer locked behind proprietary APIs. If you have compute and talent, you can build world-class models and release them freely.

2. Efficiency Beats Scale

Bigger and more expensive doesn’t automatically mean better. Architectural innovation (sparse attention, expert routing, training algorithms) can match or exceed brute-force computation scaling.

3. Geography Matters Less

A team in China working around U.S. export restrictions achieved frontier results faster than well-funded Silicon Valley labs. The geography of AI innovation is shifting.

For developers: Your favorite AI tools might be rebuilt on DeepSeek by next year. Expect to see V3.2 integrated into VS Code, GitHub Copilot competitors, and enterprise AI platforms because the cost advantage is too significant to ignore.

For enterprises: You have optionality now. You don’t have to choose between OpenAI, Google, and Anthropic. DeepSeek is a legitimate fourth option with different tradeoffs (cheaper, open-source, locally deployable, but no vision/multimodality).

For researchers: The technical papers are worth reading. The sparse attention mechanism, group relative policy optimization, and expert routing innovations solve real problems that other labs are also tackling.

How to Get Started with DeepSeek V3.2

Option 1: API Access (Easiest)

POST https://api.deepseek.com/chat/completions

Authorization: Bearer YOUR_API_KEY

Pricing: $0.28/M input, $0.42/M output

Time to first result: 5 minutes
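As a rough sketch, the request above can be built with nothing but the Python standard library. The endpoint and the Bearer header come from this article. The model name `deepseek-chat`, the OpenAI-style request body, and the helper names are assumptions, so check them against the provider’s current API documentation before relying on them.

```python
import json
import urllib.request

API_URL = "https://api.deepseek.com/chat/completions"

def build_chat_request(api_key, prompt, model="deepseek-chat"):
    """Build (but do not send) a chat completion request.

    The model name and payload shape are assumptions based on an
    OpenAI-compatible chat API.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

def send(request, timeout=60):
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))

# Building the request makes no network call; send() does.
request = build_chat_request("YOUR_API_KEY", "Summarize sparse attention in one sentence.")
```

Calling `send(request)` with a real key should return a JSON object. Assuming an OpenAI-style response, the reply text would be under `["choices"][0]["message"]["content"]`.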

Option 2: Local Deployment

# Download model weights from Hugging Face
huggingface-cli download deepseek-ai/DeepSeek-V3.2

# Run with vLLM (optimized inference engine)
python -m vllm.entrypoints.openai.api_server \
    --model deepseek-ai/DeepSeek-V3.2 \
    --tensor-parallel-size 8

Time to first result: 1-2 hours (depending on your hardware)

Option 3: Web Chat

Visit deepseek.com and start chatting immediately

Time to first result: 0 minutes

The Bottom Line

DeepSeek V3.2 is the first open-source model to achieve genuine frontier performance. It’s cheaper than every major competitor. It’s available under the permissive MIT License. And it works.

What to do next depends on who you are:

Building AI applications: You should at least evaluate V3.2 for cost savings

Concerned about data privacy: Local deployment is now viable for frontier-level performance

Interested in how frontier AI actually works: Study their sparse attention implementation

Working in competitive programming or mathematics: This model is specifically excellent for these domains

The era of AI being exclusively controlled by OpenAI, Google, and Anthropic just ended.

What’s remarkable isn’t that DeepSeek built a great model. What’s remarkable is that they built a great model and gave it away.

The technology is moving fast, and this landscape will change significantly over the next 12 months. But one thing is certain: open-source AI just became impossible to ignore.